You Underestimate the Importance of DR Testing

Well, at least 55% of you.

Over the last 4 years Unitrends and our competitors in the marketplace have released countless products, preached about the importance of data protection, worked thousands of trade shows, and trained tens of thousands of customers. You would think that all our effort is making a difference, but perhaps it isn’t.

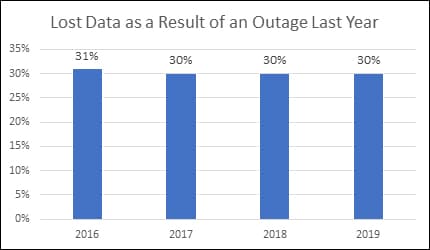

Every year Unitrends conducts a survey on data protection and recovery. The 2019 survey found that, despite all the above efforts, organizations continue to lose data and experience downtime at alarmingly high rates. Consistently, 30% of responding organizations reported losing data as the result of an outage. This figure remains stubbornly high even as new data protection tools such as cloud storage, Disaster-Recovery-as-a-Service (DRaaS), and improved data backup appliances have emerged over the same period.

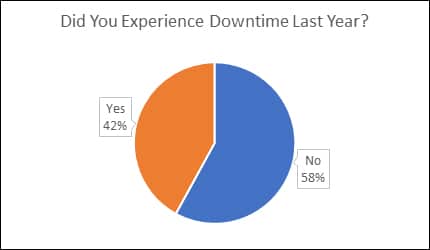

Additionally, over 40% of respondents reported having a period of downtime in 2019.

Despite all the new technologies, why do data loss and downtime continue to plague enterprises? IT headcounts and budgets are certainly being squeezed, IT infrastructures are becoming more complex as computing and data move to the cloud, and SaaS apps are on the rise. Threats are also increasing: who had heard of ransomware 5 years ago?

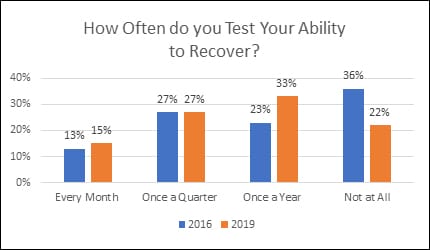

IT does share some of the blame. One factor under IT's direct control could greatly reduce downtime and data loss, yet it is not being fully used. We asked survey respondents how often they test their ability to recover from an episode of downtime. The responses were surprising.

Only 42% of respondents reported testing their ability to recover once per quarter. An additional 33% test at least once per year, and the remaining 22% are living life on the edge by not testing at all. Even those testing just yearly are risking extended downtime, as many changes are made to IT infrastructure over that time. Think about how often you add a new server, move an application, add a VM, or change network configurations. Each of these changes can potentially disrupt failovers unless it has been added to the recovery plans. The one bit of good news from this survey question is that 12% more organizations test at least annually vs. 4 years ago. Still, 55% of enterprises (the 33% that test only yearly plus the 22% that never test) underestimate the importance of recovery testing.
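To make the point about infrastructure drift concrete, here is a minimal sketch of the kind of check that catches it. It is an illustration only, not how any Unitrends product works: the asset names, the two lists, and the helper functions `uncovered_assets` and `days_since_last_test` are all hypothetical.

```python
from datetime import date


def uncovered_assets(inventory, dr_plan):
    """Return assets that exist in production but are missing from the DR plan."""
    return sorted(set(inventory) - set(dr_plan))


def days_since_last_test(last_test, today):
    """Return the number of days between the last recovery test and today."""
    return (today - last_test).days


# Hypothetical data: a VM was added after the recovery plan was last updated.
inventory = ["app-server-1", "db-server-1", "vm-reporting", "vm-new-crm"]
dr_plan = ["app-server-1", "db-server-1", "vm-reporting"]

missing = uncovered_assets(inventory, dr_plan)
stale_days = days_since_last_test(date(2019, 1, 15), date(2019, 12, 1))

print("Not covered by the recovery plan:", missing)
print("Days since last recovery test:", stale_days)
```

The longer the gap between tests, the more entries a list like `missing` tends to accumulate, which is why a yearly test can still leave a failover exposed.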

IT professionals aren’t stupid. If testing is so important, why don’t companies test more often? The reason is that testing can be costly and difficult, and it risks interrupting critical business processes. Fortunately, there are cost-effective, intelligent technologies available that can automate, orchestrate, and analyze application recoverability to ensure entire workloads are functional and, if not, report what is broken. Additionally, they produce an easy-to-read, formal report certifying the final results of the test that can be shared with auditors and senior management. These tools automate testing, so you know exactly how fast and to what point your data and applications can be recovered without requiring much manual work or extra spending.

Unitrends Recovery Assurance delivers fully automated recovery testing. Running either locally on the backup appliance or in the cloud, Recovery Assurance will automatically test and certify full business recovery. Using backups, the entire infrastructure is recreated and booted up to ensure that all data and application dependencies are in place. You can even run on-demand tests after infrastructure enhancements to ensure nothing was broken.

Testing can be difficult, time-consuming, and disruptive to production servers, or it can be free and automated with no negative impact on the business. For more information on disaster recovery testing and how best-in-class companies do it well, read Disaster Recovery Testing, Your Excuses, and How to Win.